Compliant and complete clickstream data for data engineers

Stop building on sand: When you engineer data pipelines for clickstream data, legal and technical limitations erode that data. Our privacy-first approach captures as much data as is legally and technically possible and supplements it with synthetic data points, giving you one central, rock-solid foundation to build upon.

Avoid this problem: Broken or incorrect data and the risk of fines

If you have to work with incomplete data, any data that you provide to stakeholders will be incomplete by design. Additionally, blind spots and unexpected changes in the source data can easily lead to incorrect or broken data downstream.

If legal compliance is ignored, fines can cost your organization money on data activities that haven’t earned any yet. And because getting caught using data illegally damages a company’s reputation, executives may scale back analytics operations out of caution.

Our approach: Analytics data that is both compliant and complete

Contrary to popular belief, lawful data doesn’t mean less data. It can still be complete, but it requires a much more sophisticated approach:

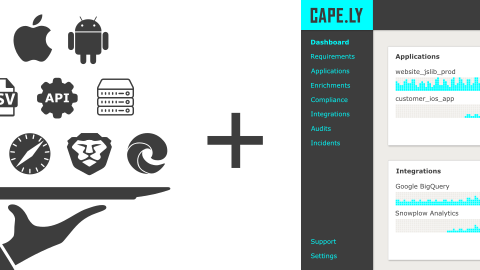

We call it the Data Cape because of its ability to resist data erosion and data loss, similar to how a geological cape is able to resist land erosion.

Likewise, the Data Cape provides a solid foundation that can withstand even the worst storm. In other words, your data pipelines will always contain the data you expect and rely on.

The outcome: No more data worries, so you can focus on data pipelines

Data engineering is a full-time job, often more than that, and it requires the ability to work to tight deadlines while staying focused on many details. We know the many problems data can cause, and we try to eliminate all of them for you.

We have made it our mission to provide stakeholders with the best data possible: Our offering is unique in that it not only includes our compliance platform, but also corresponding implementation services to give customers peace of mind.