Cape.ly solves the data industry’s biggest problem with technology based on 10+ years of experience

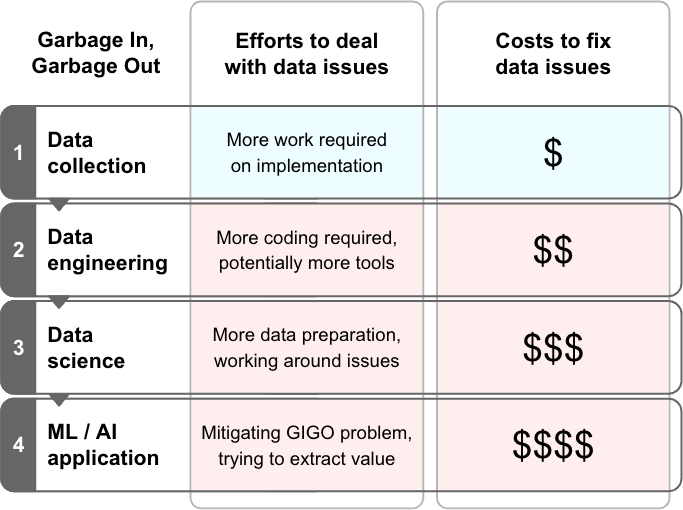

“Garbage in, garbage out” refers to the fact that low-quality inputs (source data) can’t produce high-quality outputs. User event data, also known as analytics or clickstream data, is the hardest to get right, but it is absolutely necessary to answer the why, not just the what.

While data professionals are well aware of this issue, most vendors prefer not to talk about the open secret that their solutions need high-quality data to work well. Data quality tools are the obvious exception. 😉

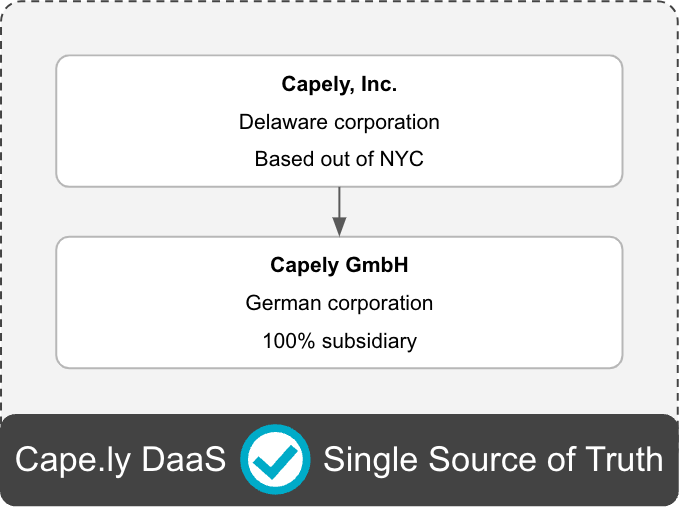

We are building technology to solve this issue and provide everyone and every tool with data from a central, high-quality source. We are a US company based in New York City with a German subsidiary.

Companies are losing billions of dollars and their best employees due to data issues

Any data professional can confirm that the further downstream data teams attempt to fix data issues, the higher the cost and the less likely it is that the data can actually be fixed. As a result, data initiatives fail and companies lose billions of dollars.

Typically, fixing data issues is a tedious manual process, and we have more than a decade of experience doing it. We have taken everything we learned and turned it into a technological solution to the problem.

Our technology produces reliable, high-quality data. The data is provided as a service (Data as a Service, or DaaS) and can be consumed by any number of vendor solutions, data stacks, or data teams.

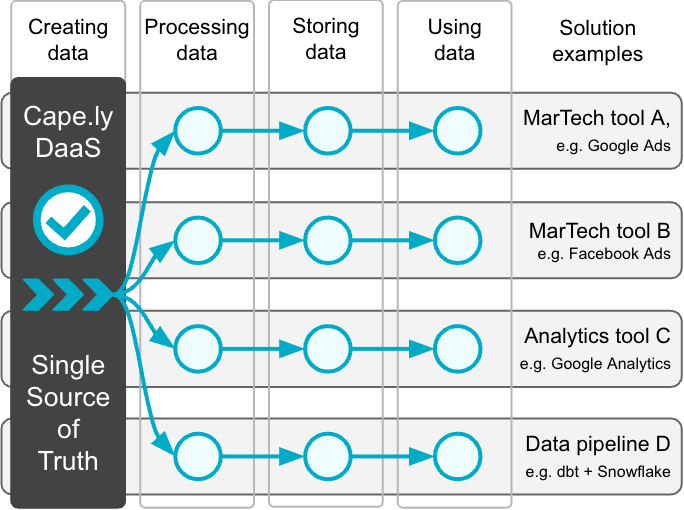

A new and modern approach to collecting and using event data: Single Source of Truth by design

From a data architecture perspective, analytics tools, data stacks, and marketing technologies are all just data pipelines that consist of the same components:

- Creating / collecting data

- Processing data

- Storing data

- Using data / creating value

Instead of having all these solutions create redundant, usually inconsistent, and often low-quality data, it’s better to create data only once and focus on its quality.

With our DaaS, you can stream the same data into different MarTech tools, analytics solutions, and data pipelines to ensure overall consistency and remove redundancies.

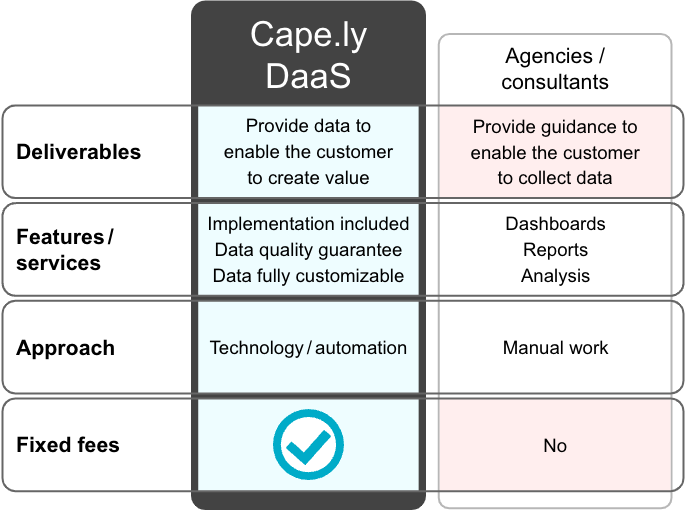
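To make the "create once, stream everywhere" idea concrete, here is a minimal sketch in Python. It is not our actual implementation: the `EventHub` class, the `validate` function, and the three required fields are hypothetical names chosen for illustration. It only shows the principle that an event is checked once at the source and then delivered unchanged to every registered consumer.

```python
from __future__ import annotations

from typing import Callable

# Hypothetical minimal schema; a real event schema would be far richer.
REQUIRED_FIELDS = {"event_name": str, "user_id": str, "timestamp": float}


def validate(event: dict) -> list[str]:
    """Return a list of problems; an empty list means the event passed."""
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in event:
            problems.append(f"missing field: {field}")
        elif not isinstance(event[field], expected_type):
            problems.append(f"wrong type for field: {field}")
    return problems


class EventHub:
    """Validates each event once, then fans it out to every registered sink."""

    def __init__(self) -> None:
        self.sinks: dict[str, Callable[[dict], None]] = {}
        self.rejected: list[tuple[dict, list[str]]] = []

    def register(self, name: str, sink: Callable[[dict], None]) -> None:
        self.sinks[name] = sink

    def publish(self, event: dict) -> bool:
        problems = validate(event)
        if problems:
            # Bad events are stopped at the source instead of being
            # repaired separately in every downstream tool.
            self.rejected.append((event, problems))
            return False
        for sink in self.sinks.values():
            sink(dict(event))  # each sink receives its own copy
        return True
```

In this sketch, an analytics tool and a MarTech tool would each be registered as a sink (for example, `hub.register("analytics", analytics_events.append)`), so both receive identical events, while anything that fails validation ends up in `hub.rejected` and never reaches either of them.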

The difference between DaaS and agencies / consultants

More than a decade of consulting and helping clients with their data issues has made us realize that the usual approach to collecting data in order to create value is inherently broken.

Most companies share responsibility for their data collection with their service providers. Unfortunately, shared responsibility doesn’t work for increasingly complex implementations.

Instead, we recommend making the data and its quality the responsibility of just one party so that the deliverable can be measured and somebody can be held accountable.

Our DaaS comes with a data quality guarantee and can be used by you and your partners. We believe that agencies create a lot of value, but data creation requires undivided attention.

For over a decade now, I have architected analytics implementations and debugged them at the network and source code level to deliver the best event data possible.

My work with medium-sized to large companies in North America and Europe has provided me with a wealth of experience and a unique combination of traits:

- Stereotypical German obsession with quality

- Stereotypical Canadian kindness

- A company in the business and data hub of New York City

I believe very few people understand the client-side and server-side technological details that affect data quality and reliability as deeply as my team members and I do.

You can find more information about past projects on my profiles on Cape.ly and LinkedIn, or on my personal website.